Why Charities and Development Organisations Need Clear Policies on AI Imagery

Deborah Adesina and David Girling explore new research that reveals a troubling pattern: when charities deploy AI-generated visuals, the conversation often stops being about the cause.

The conversation about AI and NGO communications is not new. Practitioners and researchers have been grappling with it for several years now. Two contributions stand out as particularly influential in shaping how the sector thinks about this. Alenichev et al. have raised important questions about what AI-generated imagery means for photographers and filmmakers in the Global South, people whose livelihoods, creative authorship and local knowledge risk being bypassed when organisations reach for synthetic images instead. Meanwhile, Fairpicture, working with Kardol, has offered the sector some of the most concrete practical guidance available, recommending that NGOs avoid fabricated depictions of human suffering altogether, commission local creators where possible, and reserve AI tools for non-depictive content such as illustrations and diagrams. Together, these voices reflect a growing consensus: the ethical questions around AI imagery are not just about technology; they are about power, representation and whose stories get told. It is against this backdrop that new research from the University of East Anglia adds important empirical weight to the debate.

There is a certain irony in how many charities and development organisations are adopting artificial intelligence. The core mission of these organisations relies on human connection. Building trust with donors, funders and supporters is often key to the success of their work, but the use of AI-generated images could be quietly dismantling the very empathy and trust they depend on.

The new study, Artificial Authenticity, analyses 171 AI-generated images and 400+ public comments across campaigns from 17 major organisations, including Amnesty International, the World Health Organization and WWF. The research reveals a troubling pattern: when charities deploy AI-generated visuals, the conversation often stops being about the cause.

Instead of engaging with hunger, displacement or climate destruction, audiences pivot to debating whether what they are seeing is real. Of the 400+ public comments analysed in the study, 141 focused on AI ethics and authenticity concerns rather than the charitable cause itself. Another 122 critiqued technical quality. Fewer than one in five engaged with the humanitarian issue at the heart of the campaign. The cause disappears.

The Trust Problem

This is not simply an aesthetic problem; it is a structural one. Charities and development organisations occupy a fairly unique position in public life, one that relies on trust. That trust is fragile and, once broken, difficult to rebuild. Should authenticity not therefore be a key foundation for all organisations in the sector?

The temptation to use AI is understandable. As humanitarian budgets tighten and production pressures increase, the promise of speed, cost efficiency and creative flexibility is clearly appealing. Nearly 70 per cent of the AI images in the study were designed to appear photorealistic. This suggests that organisations are using AI not as an obvious stylistic choice, but as a substitute for photography they can no longer afford. Is this ethical?

Disclosure does not appear to provide much protection. While 85 per cent of images in the study were appropriately labelled as AI-generated, transparency alone did not shield organisations from public backlash. Audiences who knew they were looking at AI became investigators rather than donors: they scrutinised the images for technical flaws and debated the ethics of the technology rather than engaging with the cause.

The Alignment Problem

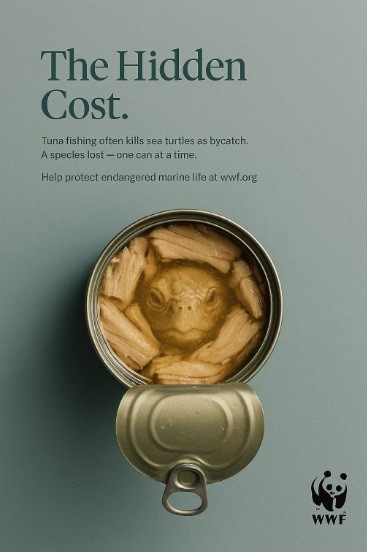

The research also highlights what it calls “message–medium misalignment”. This is particularly relevant for organisations working in the environmental sector. WWF Denmark, for instance, faced significant public criticism for using energy-intensive AI tools to promote sustainability messaging. A climate-conscious public was quick to identify and condemn what it saw as hypocrisy, with some labelling the decision “ecocidal”.

The picture is not entirely bleak. The study acknowledges that for some organisations, AI-generated imagery can serve a legitimate protective function by enabling campaigns to proceed without re-traumatising vulnerable individuals through photography or filming. This is a genuine ethical consideration, particularly in contexts involving survivors of conflict, abuse or forced displacement.

Yet even here, the research finds that donors often reject synthetic imagery, prioritising their own desire for an “authentic witness” over a beneficiary’s right to privacy. This is an argument that is likely to divide opinion.

Responsible AI Use

The Artificial Authenticity report is not an argument for banning AI from the charity and development sector. It is an argument for treating it as what it is: a powerful tool that requires governance, transparency and critical scrutiny.

Policy implications

- Charities should establish clear public policies governing AI imagery. Every organisation using AI-generated visuals should publish an ethical content policy specifying when and how AI imagery may be used, where it is inappropriate, and how outputs will be reviewed and disclosed.

- Organisations must invest in staff capability. Communications teams need practical training in responsible AI image generation, including prompt engineering and bias mitigation. Decisions about skin tone, clothing, environment and cultural markers are not merely technical details; they shape how communities are represented.

- The sector should move away from default photorealism. The evidence suggests that photorealistic AI imagery creates the greatest reputational risk because it invites audiences to judge synthetic images as documentary truth. Alternative visual approaches such as illustrative, diagrammatic or future-oriented representations may in some contexts be more transparent and more effective.

- Communities should be involved in the creation of imagery that depicts them. Where AI is used, affected communities should be involved in shaping prompts, reviewing outputs and approving final visuals. Without this step, synthetic imagery risks becoming a projection of assumptions made in distant offices rather than a representation grounded in lived experience.

- Charities must broaden the stories their imagery tells. Poverty, often depicted through images of children, dominates AI-generated charity visuals. This narrow framing reduces complex global challenges to a single visual story. AI-generated imagery should instead reflect the full diversity of age, gender, disability, nationality and context present in the communities that charities and development organisations work with.

The findings of the Artificial Authenticity report arrive at a moment when public trust in institutions is already under pressure. An increasingly media-literate public is faster than ever to identify inauthenticity and slower to forgive it.

The organisations that will maintain credibility in this new era are not those that resist technological change, but those that approach it with the same ethical rigour they apply to their humanitarian work.

AI is not inherently incompatible with charitable storytelling. But the evidence now suggests that treating it as a cheap shortcut to empathy could have long-term reputational implications.

Download the full report at www.charity-advertising.co.uk